Understanding what affects beauty through the pirouette of a dancer

An in-depth analysis of a ballet dancer’s movements could help explain how we judge whether someone moves with grace and beauty, researchers at Queen Mary, University of London suggest.

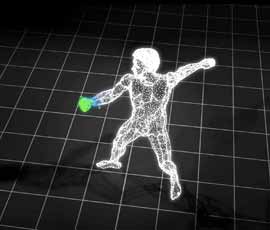

Italian ballet dancer Roberto Bolle in digital form

A team of scientists from Queen Mary’s School of Electronic Engineering and Computer Science and the Massachusetts Institute of Technology (MIT) worked with the renowned Italian ballet dancer Roberto Bolle to capture his movements using a variety of new technologies.

They created a pixelated digital replica of Bolle using 3D body scanning, motion capture and digital conversion, with the aim of studying the body in new ways.

“We know that people are sensitive to changes in human movement at the millimetre and millisecond level,” said Pat Healey, Professor of Human Interaction and lead of the Interaction, Media and Communication Research Group at Queen Mary.

“Motion capture technologies make it possible, for the first time, to analyse human movements in full 3D at very high resolution. Modelling human movement at this unprecedented level of detail can help us to understand what affects people’s perceptions of grace and beauty.”

The project, Dancing Atoms, was carried out at Queen Mary’s Pinter Studio, where the scientists converted Bolle into a digital copy. One million digital pieces of his body were created to form a full 3D, 360-degree model. The body scanner mapped Bolle’s coordinates to replicate both his shape and his colour.

Adam Pruden, project lead and research fellow with MIT’s SENSEable City Lab, said: “What better way to study the body than through the spatial mapping of a ballet dancer?

“Greater analysis and understanding of our bodies in space is necessary as technology becomes more integrated into building infrastructure, and as we increasingly use our bodies as an input to control objects around us.”

Bolle wore a black bodysuit fitted with reflective markers, which allowed Queen Mary’s Vicon motion capture system to record his movement through space.

Stuart Battersby, PhD student in Human Interaction at Queen Mary, University of London, said: “When we motion captured Roberto, our system combined the 2D images from each infra-red camera in our 12-camera array, allowing us to determine the precise 3D coordinates of each reflective marker on Roberto’s body.

“This gave us the precise X, Y and Z coordinates for the 42 markers on his body, recorded 120 times a second.”
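To put those figures in perspective: 42 markers, each with an X, Y and Z coordinate, sampled 120 times a second, comes to 42 × 3 × 120 = 15,120 coordinate values for every second of dancing. The short Python sketch that follows reproduces that arithmetic and shows one simple way such a recording could be held in memory, as a list of frames, each holding one (x, y, z) tuple per marker. The layout and the function names are illustrative assumptions, not the format that Vicon’s software actually exports.

```python
# Figures quoted in the article: 42 reflective markers, each reported as an
# (X, Y, Z) coordinate, recorded 120 times a second.
NUM_MARKERS = 42
SAMPLE_RATE_HZ = 120
COORDS_PER_MARKER = 3  # X, Y, Z


def values_per_second(markers: int = NUM_MARKERS, rate_hz: int = SAMPLE_RATE_HZ) -> int:
    """Number of scalar coordinate values captured per second."""
    return markers * COORDS_PER_MARKER * rate_hz


def empty_recording(seconds: float) -> list:
    """Hypothetical in-memory layout: one frame per sample, each frame a list
    of (x, y, z) tuples, one per marker. Real capture files use their own formats."""
    n_frames = int(round(seconds * SAMPLE_RATE_HZ))
    return [[(0.0, 0.0, 0.0)] * NUM_MARKERS for _ in range(n_frames)]


if __name__ == "__main__":
    print(values_per_second())      # 15120
    rec = empty_recording(2.0)
    print(len(rec), len(rec[0]))    # 240 42
```

In this layout, a two-second recording holds 240 frames of 42 markers each.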

Professor Carlo Ratti, Director of the SENSEable City Lab, said: “Think about the ‘Wonka vision’ scene from Willy Wonka and the Chocolate Factory, where the boy is photographed by a giant camera and split up into millions of tiny pieces before whizzing through the air.

“We see Dancing Atoms as a project where we can start to manipulate these millions of tiny pieces by controlling each bit as if it were a digital atom.”

By creating a digital copy of Bolle, the scientists hope the analysis will lead to a greater understanding of the key aspects of the human body’s movement capabilities and how they relate to people’s perceptions of grace and beauty.

The Dancing Atoms project was developed by Adam Pruden and Carlo Ratti of the SENSEable City Lab in collaboration with Roberto Bolle, Italy’s étoile ballet dancer; Pat Healey, Stuart Battersby, Arash Eshghi and Nicola Plant of the Interaction, Media and Communication Group at Queen Mary, University of London; Kev Stenning of Rapido3D; and Sanders Hernandez and EunSun Lee of CMYK+WHITE.
